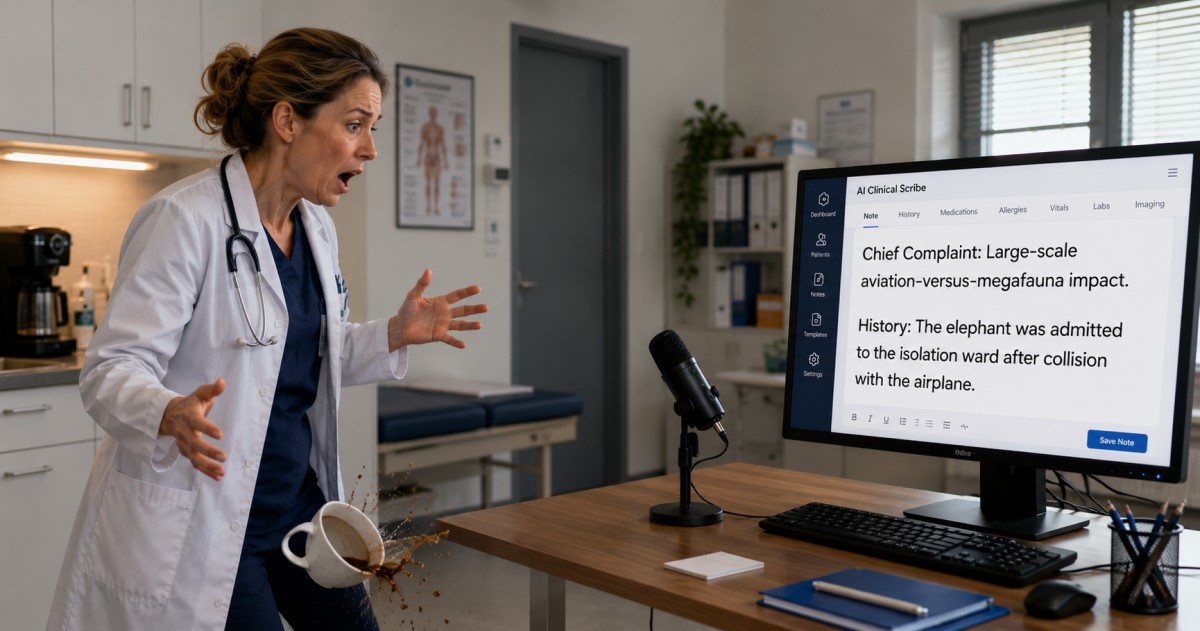

Room setup can make the note fail

A clinical scribe can become unsafe before the model even starts: poor audio can erase the clinical story.

Machine Generated Science • ISSN 3054-3991

DOI: 10.62487/saimsara5a879885

The risky output may be not a bizarre false sentence but a missing history, decision, or operative detail.

This makes governance, not only accuracy, a core deployment risk for ambient clinical AI.
